Pose estimation covers several sub-tasks, such as estimating poses in a video, in an image containing several people, or at a crowded event. Human pose estimation can be performed in 2D or 3D, and its key applications lie in activity recognition, animation, gaming, and augmented reality. For example, a popular deep learning application uses this technology to analyze the movements of a basketball player.

Human pose estimation can be defined as the problem of localizing human joints in an image or video in order to predict their positions, or of searching for a particular pose in the space of all articulated poses.

With accurate pose estimation, it becomes possible to track a person, or even a group of people, in real-world space at a granular level, which opens up numerous applications. From AI-powered personal trainers and virtual sports coaches to movement tracking on factory floors to improve worker safety, human pose estimation can enable a new wave of automated tools designed to measure human movement accurately and precisely.

Traditionally, understanding human poses from images of people in action was difficult because of strong articulation, clothing, lighting changes, and small or barely visible joints. In recent years, however, advances in deep learning have led to breakthroughs in pose estimation.

With the introduction of "DeepPose", research in human pose estimation gradually began to shift from classical approaches to deep learning.

OpenPose

RMPE (AlphaPose)

DeepPose

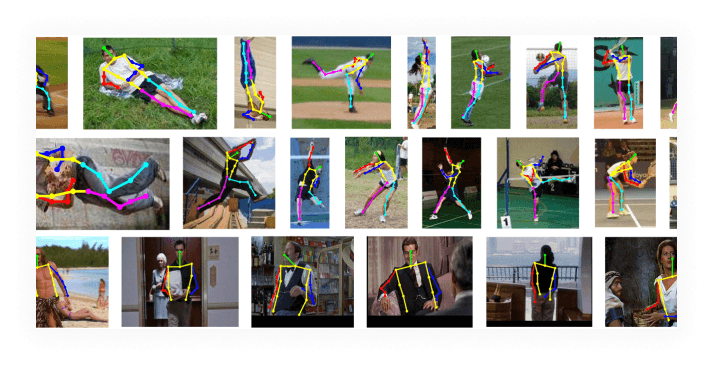

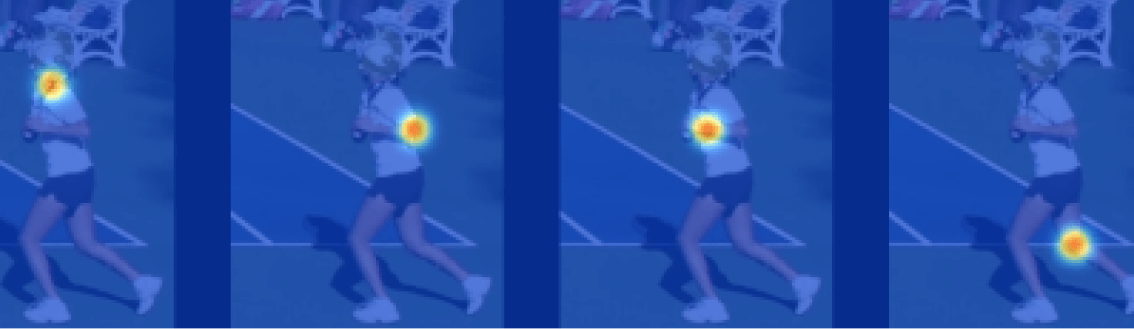

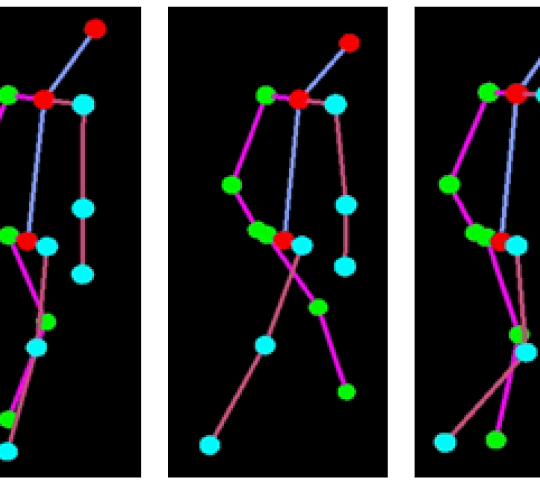

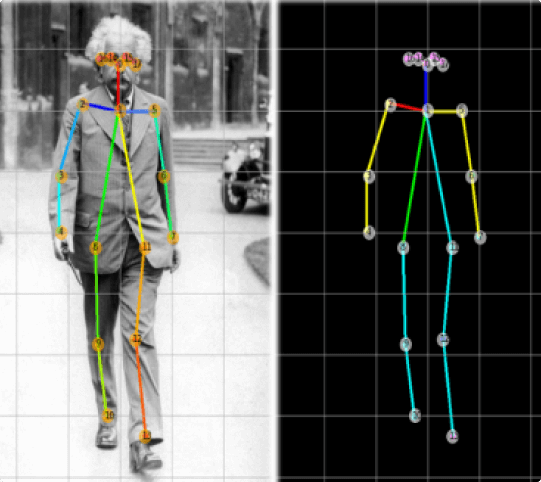

OpenPose is a popular system for multi-person human pose estimation, largely due to its GitHub implementation. It takes a bottom-up approach: it first detects the body parts of every person in an image, and then groups the parts that belong to each individual. The OpenPose network first extracts features from the image using its first few layers, and these features are then fed into two parallel branches of convolutional layers.

The first of these two branches predicts a set of eighteen confidence maps, each representing a specific part of the human pose skeleton. The second branch predicts a set of thirty-eight Part Affinity Fields (PAFs), which represent the degree of association between parts.
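To make the idea of a confidence map more concrete, here is a minimal sketch of how candidate part locations might be read out of one map. It assumes a map is a plain 2D grid of scores and uses simple local-maximum picking; the function name `find_peaks` and the threshold are illustrative choices, not part of OpenPose itself.

```python
def find_peaks(conf_map, threshold=0.1):
    """Return (x, y, score) for each cell that is above the threshold
    and strictly greater than its 4-connected neighbors."""
    height = len(conf_map)
    width = len(conf_map[0]) if height else 0
    peaks = []
    for y in range(height):
        for x in range(width):
            score = conf_map[y][x]
            if score < threshold:
                continue
            neighbors = [
                conf_map[ny][nx]
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                if 0 <= ny < height and 0 <= nx < width
            ]
            if all(score > n for n in neighbors):
                peaks.append((x, y, score))
    return peaks


# Toy confidence map for one body part (hypothetical values):
# two people are visible, so the map has two peaks.
toy_map = [
    [0.0, 0.1, 0.0, 0.0, 0.0],
    [0.1, 0.9, 0.1, 0.0, 0.0],
    [0.0, 0.1, 0.0, 0.2, 0.0],
    [0.0, 0.0, 0.2, 0.8, 0.2],
]
peaks = find_peaks(toy_map, threshold=0.5)
print(peaks)
```

Because the approach is bottom-up, a single map can contain one peak per visible person, which is why a separate grouping step is needed afterwards.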

The succeeding stages refine the predictions made by each branch. Using the part confidence maps, bipartite graphs are formed between pairs of parts, and the weaker links in them are pruned using the PAF values. In this way, human pose skeletons can be estimated and assigned to each person in the image.
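The pruning step can be sketched roughly as follows. This is a simplified illustration, not the OpenPose implementation: it assumes a PAF is stored as two 2D grids (`paf_x`, `paf_y`) holding the x and y components of a unit vector field, scores each candidate link by averaging the field's alignment with the segment between two parts, and then resolves the bipartite graph with a greedy matching swapped in for simplicity. The names `paf_score`, `match_parts`, and `min_score` are hypothetical.

```python
import math


def paf_score(paf_x, paf_y, start, end, num_samples=10):
    """Average dot product between the PAF and the unit vector start->end,
    sampled at evenly spaced points along the segment."""
    dx, dy = end[0] - start[0], end[1] - start[1]
    length = math.hypot(dx, dy)
    if length == 0:
        return 0.0
    ux, uy = dx / length, dy / length
    total = 0.0
    for i in range(num_samples):
        t = i / (num_samples - 1)
        x = int(round(start[0] + t * dx))
        y = int(round(start[1] + t * dy))
        total += paf_x[y][x] * ux + paf_y[y][x] * uy
    return total / num_samples


def match_parts(starts, ends, paf_x, paf_y, min_score=0.5):
    """Prune weak links, then greedily keep the strongest remaining links
    so that each part is used at most once."""
    candidates = []
    for i, s in enumerate(starts):
        for j, e in enumerate(ends):
            score = paf_score(paf_x, paf_y, s, e)
            if score >= min_score:  # weak links are dropped here
                candidates.append((score, i, j))
    candidates.sort(reverse=True)
    used_starts, used_ends, links = set(), set(), []
    for score, i, j in candidates:
        if i not in used_starts and j not in used_ends:
            used_starts.add(i)
            used_ends.add(j)
            links.append((i, j))
    return sorted(links)


# Toy 5x5 field: limbs run left to right along rows 0 and 4.
paf_x = [[1.0 if y in (0, 4) else 0.0 for _ in range(5)] for y in range(5)]
paf_y = [[0.0] * 5 for _ in range(5)]
shoulders = [(0, 0), (0, 4)]  # (x, y) candidates for the first part
elbows = [(4, 0), (4, 4)]     # (x, y) candidates for the second part
links = match_parts(shoulders, elbows, paf_x, paf_y)
print(links)
```

In the toy data, the diagonal pairings cross regions where the field is zero, so their scores fall below `min_score` and only the two horizontal links survive.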

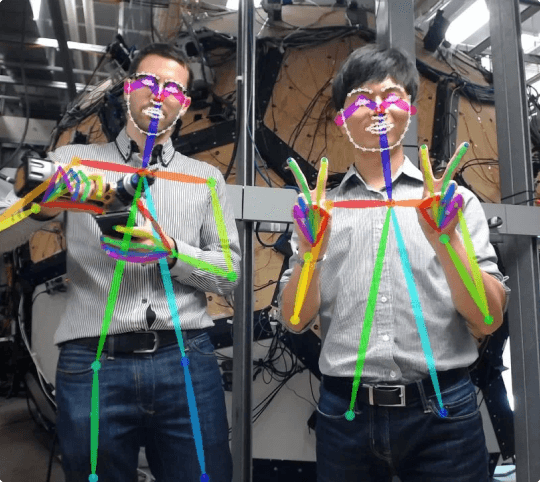

RMPE (AlphaPose) follows a top-down approach to pose estimation: a person detector is run first, and the pose and parts of each detected person are then estimated. Like other top-down systems, RMPE depends heavily on the accuracy of the person detector, because pose estimation is performed on the region where each person was detected.

As a result, the pose extraction algorithm may perform sub-optimally owing to localization errors and duplicate bounding box predictions. To resolve these concerns, the RMPE authors proposed a Symmetric Spatial Transformer Network (SSTN), which extracts a high-quality single-person region from an inaccurate bounding box. A Single Person Pose Estimator (SPPE) is then applied to the extracted region to estimate the human pose skeleton for that person.

Next, a Spatial De-Transformer Network (SDTN) remaps the estimated human pose back to the original image coordinate system. Finally, a parametric pose Non-Maximum Suppression (NMS) step handles the issue of redundant pose detections.
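The last two steps can be illustrated with a small sketch. It makes two loud simplifications: the remapping is a fixed scale-and-shift from a crop back to its bounding box (RMPE learns its transform), and the NMS uses a fixed mean keypoint distance instead of the parametric pose distance from RMPE. The names `crop_to_image`, `pose_nms`, and `min_distance` are hypothetical.

```python
import math


def crop_to_image(keypoints, box, crop_size):
    """Map (x, y) keypoints from crop coordinates back to image coordinates.
    box is (x0, y0, x1, y1) in the image; crop_size is (width, height)."""
    x0, y0, x1, y1 = box
    sx = (x1 - x0) / crop_size[0]
    sy = (y1 - y0) / crop_size[1]
    return [(x0 + x * sx, y0 + y * sy) for x, y in keypoints]


def mean_distance(pose_a, pose_b):
    """Mean Euclidean distance between corresponding keypoints."""
    return sum(math.dist(a, b) for a, b in zip(pose_a, pose_b)) / len(pose_a)


def pose_nms(poses, scores, min_distance=10.0):
    """Keep poses in descending score order, dropping any pose that lies
    closer than min_distance to a pose that was already kept."""
    order = sorted(range(len(poses)), key=lambda i: scores[i], reverse=True)
    kept = []
    for i in order:
        if all(mean_distance(poses[i], poses[k]) >= min_distance for k in kept):
            kept.append(i)
    return kept


# Toy example: the same two-keypoint pose estimated inside three boxes.
crop_pose = [(25, 10), (25, 50)]
boxes = [(100, 50, 200, 250), (104, 50, 204, 250), (300, 50, 400, 250)]
poses = [crop_to_image(crop_pose, box, crop_size=(50, 100)) for box in boxes]
scores = [0.9, 0.6, 0.8]
kept = pose_nms(poses, scores)
print(poses[0], kept)
```

The first two boxes are near-duplicates of the same person, so only the higher-scoring pose of the two is kept, alongside the pose from the third box.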

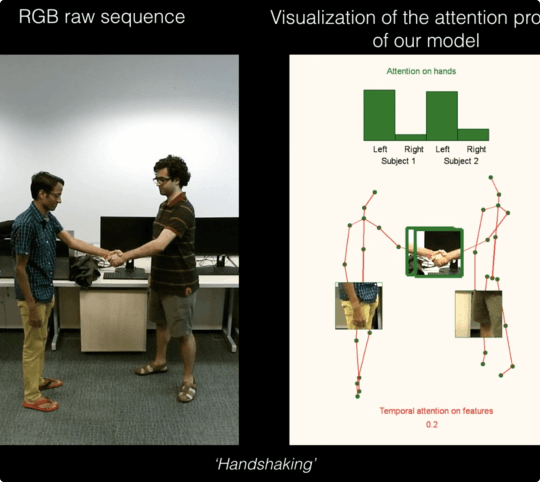

DeepPose is among the first major papers to apply deep learning to human pose estimation; it outperformed various traditional models and achieved state-of-the-art (SOTA) performance at the time. DeepPose formulates human pose estimation as a Convolutional Neural Network (CNN)-based regression problem toward the body joints.

It also uses a cascade of regressors to refine the pose estimates and obtain better results. Owing to its effectiveness, DeepPose has emerged as one of the best-known deep learning methods for human pose estimation.
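The control flow of such a cascade can be sketched as below. This is only a structural illustration under stated assumptions: the regressors are passed in as plain callables standing in for trained CNNs, each refinement stage is assumed to return per-joint offsets, and the toy refiner reads a known target pose purely so the example runs. The names `run_cascade`, `toy_initial`, and `toy_refiner` are hypothetical.

```python
def run_cascade(image, initial_regressor, refiners):
    """Predict a coarse pose, then let each refinement stage add
    per-joint (dx, dy) offsets to the current estimate."""
    pose = initial_regressor(image)
    for refine in refiners:
        offsets = refine(image, pose)
        pose = [(x + dx, y + dy) for (x, y), (dx, dy) in zip(pose, offsets)]
    return pose


# Toy stand-ins for trained networks (assumptions for illustration only).
TARGET = [(40.0, 20.0), (60.0, 80.0)]  # pretend ground-truth joints


def toy_initial(image):
    """Coarse first-stage guess, deliberately off target."""
    return [(32.0, 28.0), (68.0, 72.0)]


def toy_refiner(image, pose):
    """Moves each joint halfway toward the target; a real stage would
    predict the offset from image content around the current joint."""
    return [((tx - x) / 2, (ty - y) / 2) for (x, y), (tx, ty) in zip(pose, TARGET)]


refined = run_cascade(None, toy_initial, [toy_refiner, toy_refiner])
print(refined)
```

Each stage starts from the previous estimate rather than from scratch, so the remaining error shrinks stage by stage in this toy setup.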

Over the years, remarkable progress has been made in human pose estimation, especially through deep learning. New techniques have enabled it to serve its many possible applications more efficiently and effectively.